Penguin Solutions Inc. has introduced what it describes as the industry’s first production-ready KV cache server built on Compute Express Link (CXL) memory technology, aimed at addressing the growing “memory wall” challenge in AI inferencing. The new system, branded as the MemoryAI KV cache server, is designed to enhance performance for enterprise-scale AI workloads, including emerging agentic AI applications.

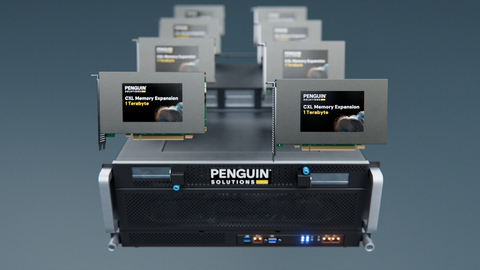

The server delivers up to 11 TB of CXL-based memory, combining 3 TB of DDR5 system memory with up to eight 1 TB CXL add-in cards. This architecture is intended to significantly expand memory availability to GPUs, which are increasingly constrained by memory bandwidth and capacity limitations in large-scale AI deployments.

As AI models continue to grow in size and complexity, inference workloads have become predominantly memory-bound rather than compute-bound. Industry estimates suggest that inference tasks are approximately 70% dependent on memory performance, compared to 30% on compute resources. This imbalance has led to bottlenecks, reduced GPU utilization, and increased latency in delivering AI-generated outputs.

Penguin Solutions’ new platform seeks to address these challenges by accelerating memory-intensive processes. By increasing available memory and enabling faster access to key-value (KV) cache data, the system aims to reduce latency, improve throughput, and shorten time-to-first-token (TTFT), a critical performance metric in real-time AI applications.

Phil Pokorny, Chief Technology Officer at Penguin Solutions, noted that CXL-enabled KV cache technology plays a crucial role in improving end-to-end token throughput and enabling consistent service-level performance across enterprise environments. He added that the solution is designed to support increasing demands driven by larger models, longer context windows, and higher concurrency requirements.

The MemoryAI KV cache server also introduces a new tier of disaggregated memory within AI clusters. By supplementing high-bandwidth memory (HBM) and system DRAM, the CXL-based architecture offers speeds significantly faster than traditional NVMe-based storage approaches. This enables more efficient offloading of KV cache data and reduces redundant computations.

In practical terms, the system is positioned to support applications requiring large context windows and low latency, such as real-time financial data analysis, retrieval-augmented generation (RAG) across large datasets, and regulatory compliance processing.

The platform is compatible with NVIDIA’s Dynamo software architecture, allowing integration with existing AI infrastructure for KV cache memory offloading. Additionally, the server is designed to improve cost and power efficiency by optimizing GPU utilization and reducing the need for over-provisioning compute resources.

Penguin Solutions indicated that customers are already deploying the MemoryAI KV cache server in production environments to enhance cluster performance and meet stringent latency requirements.