Rambus Inc. has introduced a new high-bandwidth memory controller designed to meet the growing performance requirements of artificial intelligence (AI) and high-performance computing (HPC) systems. The company announced the launch of what it describes as its industry-leading HBM4E Memory Controller IP, aimed at enabling next-generation AI accelerators and graphics processing units (GPUs) to handle increasingly bandwidth-intensive workloads.

The new controller extends Rambus’ portfolio of high-bandwidth memory (HBM) intellectual property and is designed to address the rapid rise in data processing requirements driven by advanced AI models, large-scale machine learning workloads, and graphics-intensive applications. As AI systems continue to scale in complexity, memory bandwidth has become a critical factor influencing the performance and efficiency of processors used in AI training and inference environments.

“Given the insatiable bandwidth demands of AI, it’s imperative for the memory ecosystem to continue aggressively advancing memory performance,” said Simon Blake-Wilson, senior vice president and general manager of Silicon IP at Rambus. “As a leading silicon IP provider for AI applications, we are bringing the industry’s leading HBM4E Controller IP solution to the market as a key enabler for breakthrough performance in next-generation AI processors and accelerators.”

The Rambus HBM4E Controller IP supports data transfer rates of up to 16 gigabits per second (Gb/s) per pin, which allows it to deliver a peak throughput of approximately 4.1 terabytes per second (TB/s) to each HBM4E memory device. In a system integrating eight HBM4E memory stacks, this adds up to more than 32 TB/s of aggregate memory bandwidth, a level intended to meet the intensive demands of emerging AI workloads.
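The headline figures follow from simple arithmetic, shown in the minimal sketch below. It assumes each HBM4E stack exposes a 2048-bit data interface, the width JEDEC defines for HBM4. The article does not state the HBM4E interface width, so treat that constant as an assumption. Units are decimal (1 TB = 1,000 GB), and the result is a theoretical peak that ignores protocol, refresh, and other overheads.

```python
# Sketch: theoretical peak HBM bandwidth from the per-pin data rate.
# ASSUMPTION: 2048 data pins per stack (the JEDEC HBM4 interface width);
# the article does not state the HBM4E interface width.

PIN_RATE_GBPS = 16           # per-pin data rate from the article, in Gb/s
INTERFACE_WIDTH_BITS = 2048  # assumed number of data pins per HBM4E stack
STACKS = 8                   # stack count from the article's example system


def per_stack_tbps(pin_rate_gbps: float, width_bits: int) -> float:
    """Peak bandwidth of one stack in terabytes per second (decimal units)."""
    gigabits_per_s = pin_rate_gbps * width_bits  # summed across all data pins
    gigabytes_per_s = gigabits_per_s / 8         # 8 bits per byte
    return gigabytes_per_s / 1000                # GB/s -> TB/s


per_stack = per_stack_tbps(PIN_RATE_GBPS, INTERFACE_WIDTH_BITS)
total = per_stack * STACKS

print(f"Per stack: {per_stack:.3f} TB/s")     # Per stack: 4.096 TB/s
print(f"{STACKS} stacks: {total:.3f} TB/s")   # 8 stacks: 32.768 TB/s
```

With these inputs, one stack works out to about 4.1 TB/s and eight stacks to roughly 32.8 TB/s, consistent with the "more than 32 TB/s" figure quoted above. Real-world sustained bandwidth will be lower than this peak.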

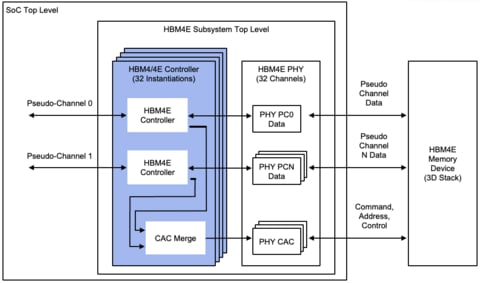

The controller can be integrated with third-party standard or TSV-based PHY solutions to create a complete HBM4E memory subsystem. These subsystems can be deployed within advanced 2.5D or 3D packaging architectures, commonly used in modern AI system-on-chip (SoC) designs and custom base die solutions.

Industry stakeholders have emphasized the importance of advancing memory performance to keep pace with the rapid evolution of AI hardware.

“HBM4E represents a significant milestone for HBM technology, delivering unprecedented performance for advanced AI and HPC workloads,” said Ben Rhew, corporate vice president and head of the Foundry IP Development Team at Samsung Electronics. “HBM4E IP solutions will be essential for broad industry adoption.”

Reiner Pope, co-founder and CEO at MatX, highlighted the role of memory bandwidth in AI performance, noting that it remains one of the primary bottlenecks in large language model processing. Analysts also point to increasing demand for high-density, high-performance memory solutions as AI processors continue to scale.

According to Soo Kyoum Kim, program associate vice president for Memory Semiconductors at IDC, continued innovation in HBM technology will be critical to supporting the next generation of AI hardware platforms.